Technical SEO is the process of optimizing a website’s technical aspects so that search engines like Google and Bing can efficiently crawl, render, index and rank the site’s content.

It is an important aspect of digital marketing because if search engines can’t properly crawl or index your site, it won’t appear in search results for your target keywords, no matter how great the content is.

While often overlooked because of a lack of technical knowledge or understanding of its importance, a technical SEO program is essential for delivering the desired results from all your content marketing and link-building activities.

In this blog, we will provide a high-level view of elements and tools used in a technical SEO audit so you can have meaningful conversations with your website or search engine optimization team.

Key components of technical SEO include…

- Crawling and indexing: Ensuring that search engine bots can easily find your website’s pages.

- Linking: Adding links to internal and external content.

- Site speed: Optimizing page load times, especially on mobile devices, for better rankings and user experience.

- Secure website (HTTPS): Using SSL certificates to ensure secure data transmission.

- Structured data markup: Using schema.org or other formats to help search engines understand the context of the content.

- XML sitemaps: Providing a roadmap for search engines to follow and help them find all essential pages on your site.

- Robots.txt file: Ensuring the robots.txt file is correctly configured to guide search engines on which pages to crawl or ignore.

- URL structure: Creating clean, user-friendly, and search engine-friendly URLs.

- Canonicalization: Preventing duplicate content issues by specifying a page’s “canonical” version.

- Fixing 404 errors: Addressing broken links and error pages to ensure that all pages are accessible and provide a good user experience.

Website crawler

Website crawlers, also known as web crawlers, spiders, or bots, are tools search engines use to scrape the Internet and index websites systematically.

Technical SEO professionals also use them to mimic search engine crawlers. They are useful for auditing websites, as they allow you to see your site the way a search engine crawler would and look for potential issues (like broken links and missing metadata) that may prevent search engine bots from indexing your pages.

Most SEO tools have some sort of crawling ability, but not all crawlers are created equal. Many technical SEO professionals rely on multiple crawlers to ensure that all issues are being caught.

Examples of website crawling tools available to SEO professionals for technical SEO audits include Screaming Frog, Semrush, JetOctopus and Sitebulb.

When selecting a website crawler, look for one that gets updated regularly. Also, look for one that allows you to monitor your site’s health continuously and provides an easy way to view and track issues so you can systematically solve the right ones.

Google Chrome’s Website Inspector

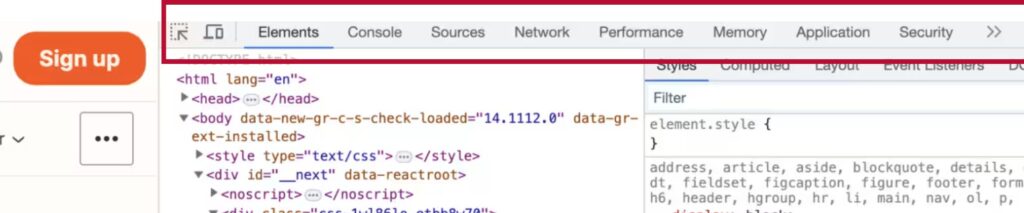

Google Chrome’s Website Inspector, also called Chrome DevTools, is useful for technical SEO.

To access the inspector, press F12 or Ctrl+Shift+J on a Windows computer. On a Mac, use Command+Option+J. You can also right-click on any page and then choose Inspect to be taken right to the code for that element in the tools.

The options across the top are typically Elements, Console, Sources, Network, Performance, Memory, Application, Security, Lighthouse, Recorder, and Performance insights.

Where to find Chrome's website inspector.

In the Elements area you can look at the code of a page. This is a great way to check if the page is outlined correctly for headings, for example.

Console is useful if you’re doing lots of JavaScript or analytics work.

Checking site speed

The Sources, Network, and Performance sections are all helpful for site speed. The Sources tab shows you all the domains called to build the page. This is worth reviewing because a page that pulls files from many different domains will often load slowly.

The Network tab will show you every element called to build the current webpage. You’ll want to check for elements that do not have a status of 200, as that can definitely slow the site, especially if an element is returning a 404 Not Found code.

The Performance tab gives more in-depth information about how elements in the page load and how they factor into overall site speed.

In the Security section, you want to ensure the site has a valid and secure certificate.

Website testing

Finally, Lighthouse is the name for Google’s automated tool for webpage testing. It will simulate your page loading on a slow connection and look for accessibility and SEO issues. It’s a great place to start your technical SEO audit.

Experts recommend that you run Lighthouse in incognito mode. This way, you’ll avoid any issues with Chrome plugins you may have installed that could affect the report’s outcome.

Speed testing for technical SEO

Site speed is a critical part of technical SEO. It impacts your ability to rank, user experience and conversion rates.

Speed testing tools are available to help you identify any issues on your site. Google’s PageSpeed Insights (PSI) tool is one of the first that people turn to. It gives you insight into how Google will judge your site in its core algorithm. For more information on PSI metrics, see Google’s Core Web Vitals resources.

GTmetrix is another tool for identifying site speed issues. It’s free, but paid options allow you to monitor larger sites more frequently.

Many experts recommend running your site(s) through multiple tools to see how they interpret results and get a different perspective.

You’ll likely see in the results of all of these speed tools that your JavaScript files have issues. They’re either too large, taking too long to process, or holding things up in another way. Yellow Lab Tools is a free tool that helps developers identify issues in their JavaScript.

Finally, use Google Search Console’s Core Web Vitals section and the page experience section to see how your site is performing. It won’t give you results for every single page, and the number of results you get depends on how popular your site is, but this is the best way to know what Google is seeing.

Role of robots.txt file

Robots.txt files are text files that live in the root of your website. You can check if your website has one by going to yourdomain.com/robots.txt.

Robots.txt files tell search engine crawlers which pages or files they can or cannot access on your website, using Disallow and Allow instructions. This is useful for keeping robots out of areas where they won’t find anything useful, such as the wp-admin directory in WordPress.

Many CMSs, such as WordPress, automatically create one, but that doesn’t mean it’s set up correctly. Here is an example of what your robots.txt file may look like:

User-agent: *

Disallow: /

User-agent: Googlebot

Disallow:

User-agent: bingbot

Disallow: /not-for-bing/

Directives like Allow and Disallow are not case-sensitive, but their values are. This means that /photo/ is different from /Photo/.

As the above example shows, you can add multiple sets of user agents. Leaving the Disallow value empty for Googlebot means it is free to crawl the entire site, while Bing can’t crawl the not-for-bing folder and every other bot is kept out of the whole site.

Notice that relative folders are used instead of the full URL. You don’t want to use complete URLs in these commands.
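If you want to sanity-check rules like these before publishing them, Python's standard library includes a robots.txt parser. The sketch below feeds it the example file and asks which bots may fetch which paths. The domain www.example.com, the bot name SomeOtherBot and the test paths are made up for illustration, and Python's parser is only an approximation of how each search engine reads the file.

```python
from urllib.robotparser import RobotFileParser

# The example robots.txt from above, one directive per line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /

User-agent: Googlebot
Disallow:

User-agent: bingbot
Disallow: /not-for-bing/
"""

SITE = "https://www.example.com"  # hypothetical domain


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that is already in memory (no network access)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed(parser: RobotFileParser, agent: str, path: str) -> bool:
    """Return True if the given bot may fetch the given path."""
    return parser.can_fetch(agent, SITE + path)


parser = build_parser(ROBOTS_TXT)
for agent, path in [
    ("Googlebot", "/blog/"),
    ("bingbot", "/blog/"),
    ("bingbot", "/not-for-bing/page.html"),
    ("bingbot", "/Not-For-Bing/page.html"),  # different case, different path
    ("SomeOtherBot", "/blog/"),
]:
    print(f"{agent:13} {path:26} allowed={allowed(parser, agent, path)}")
```

The fourth check illustrates the case-sensitivity point: /Not-For-Bing/ is not covered by a rule written for /not-for-bing/.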

Wildcards and regular expressions

The star is a wild card that can match multiple URLs at once, while the dollar sign command indicates the end of a URL.

In the example below, any URL containing .php, and any URL in the copyrighted-images folder containing .jpg (not .JPG), will be disallowed.

For the line with the $ sign, /index.php can’t be crawled, but /index.php?p=1 could be. The same pattern could be used to disallow any files that end with the .pdf extension.

Disallow: /*.php

Disallow: /copyrighted-images/*.jpg

Disallow: /*.php$
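To see how the star and dollar sign behave, it can help to translate a rule into a regular expression and try a few paths. This is a minimal sketch of that idea, not the matcher any search engine actually uses; the function names and test paths are invented for the example.

```python
import re


def rule_to_regex(rule: str) -> re.Pattern:
    """Translate a robots.txt path rule into a compiled regular expression.

    '*' matches any run of characters and a trailing '$' anchors the end
    of the URL. Everything else is matched literally and case-sensitively.
    """
    anchored = rule.endswith("$")
    if anchored:
        rule = rule[:-1]
    # Escape the literal pieces, then join them with '.*' where a '*' stood.
    body = ".*".join(re.escape(piece) for piece in rule.split("*"))
    return re.compile(body + (r"\Z" if anchored else ""))


def rule_matches(rule: str, path: str) -> bool:
    """Return True if the rule applies to the path (matching from the start)."""
    return rule_to_regex(rule).match(path) is not None


for rule, path in [
    ("/*.php", "/index.php?p=1"),
    ("/*.php$", "/index.php"),
    ("/*.php$", "/index.php?p=1"),
    ("/copyrighted-images/*.jpg", "/copyrighted-images/cat.jpg"),
    ("/copyrighted-images/*.jpg", "/copyrighted-images/cat.JPG"),
]:
    print(f"{rule:28} {path:30} matches={rule_matches(rule, path)}")
```

Without the dollar sign, a rule matches any URL that starts with the pattern, which is why /*.php also catches /index.php?p=1.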

More robots.txt file tips

Experts recommend that every site have a robots.txt file. When building your file, ensure there are no conflicting rules. Make sure that the robots.txt file is named in all lowercase.

Once your robots.txt file is created, check it for errors using the robots.txt report in Google Search Console, which can be found under Settings.

Log file analysis

Log file analysis is a technical SEO tool for determining which search engine bots visited your site and what they did while there. This can help you isolate issues and determine what you need to work on.

You can typically get server logs from your web host. To analyze them, you’ll need a tool like Screaming Frog’s log file analyzer. It will:

- Identify crawled URLs and crawl frequency

- Find broken links and errors

- Allow you to audit redirects

- Analyze your most and least crawled URLs and directories of the site

- Identify large and slow pages

- Find uncrawled and orphan pages
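For a rough idea of what such a tool does under the hood, the sketch below counts bot visits per URL and collects error responses. It assumes your host delivers logs in the common "combined" access log format; the sample lines, IP addresses and paths are invented. It also trusts the user-agent string, which can be spoofed, so treat the output as a first look rather than a verified report.

```python
import re
from collections import Counter

# One line of the "combined" access log format (an assumption about your host).
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Invented sample data for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /services/ HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Oct/2024:13:56:02 +0000] "GET /old-page HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Oct/2024:13:57:10 +0000] "GET /services/ HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [11/Oct/2024:08:12:44 +0000] "GET /services/ HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]


def summarize_bot_hits(lines, bot_token="Googlebot"):
    """Return (hits per path, error counts per (path, status)) for one bot."""
    hits = Counter()
    errors = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or bot_token.lower() not in match["agent"].lower():
            continue  # unparseable line or a different visitor
        hits[match["path"]] += 1
        if match["status"].startswith(("4", "5")):
            errors[(match["path"], match["status"])] += 1
    return hits, errors


hits, errors = summarize_bot_hits(SAMPLE_LOG)
print("Most crawled:", hits.most_common())
print("Errors:", dict(errors))
```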

It’s important to check your log files periodically as part of your technical SEO, especially if you suspect that your website is not ranking well or you suspect there may be a problem.

Google’s Core Web Vitals

According to Google, the probability of a bounce increases by 32% as page load time increases from 1 second to 3 seconds. Making your website fast will help make your website successful.

Google has made speed metrics part of its page experience score and ranking algorithm. It has also provided a clear set of metrics for optimizing sites. Called Core Web Vitals, the three measures used are:

- Largest Contentful Paint (LCP) – measures the page’s loading performance.

- Interaction to Next Paint (INP) – how long the page takes to respond to a user interaction.

- Cumulative Layout Shift (CLS) – the amount of shifting on screen as the page loads.

Google used to include First Input Delay, or FID, in their list of Core Web Vitals, but that was changed in early 2024, and FID was replaced with INP.

You can see your Core Web Vitals scores in Google Search Console, but the report doesn’t tell you how to fix any issues. To determine the specific problems, you’ll need to run your site through a speed testing tool.

Technical SEO tools to consider using include Google’s Lighthouse, Google PageSpeed Insights, and GTmetrix.

More about LCP

Largest Contentful Paint (LCP) measures how long it takes for the largest content element that’s visible in the viewport to be loaded. The viewport is what you see on the screen when you load up a website.

A good LCP score is less than 2.5 seconds, a needs improvement score is between 2.5 seconds and four seconds, and a poor LCP score is anything above four seconds.

Typically, an LCP element is a banner image; sometimes, it can be a particularly large block of text. The goal with LCP is to prioritize the loading so this element comes in as fast as possible, even if other things are loading outside the viewport.

Examining LCP issues

GTmetrix is a helpful tool for examining LCP issues (under the Waterfall tab). However, you should be cautious about deferring any element that will cause objects on the page to move around as the page loads, which could negatively impact your CLS score.

You may also find that when you run your site through GTmetrix, you have a poor response time from the server. If you see a slow time (anything over 500 milliseconds), that’s a hosting issue, and you may need to switch up (or threaten to switch) web hosts.

JavaScript files that load early (including third-party scripts used by chat widgets) or loading all the page’s images at once can sometimes cause a poor LCP. Delaying scripts or lazy loading images further down the page can help improve LCP.

Finally, minify (minimize code and markup) your CSS and JavaScript and ensure you load only what you need on every page.

Remember that the goal isn’t to shrink or remove the largest element on the page. Instead, see what you can do to ensure that element loads as fast as possible.

More about INP

Interaction to Next Paint (INP) replaced First Input Delay (FID), providing a more accurate sense of how quickly website pages visually respond to a mouse click, a screen tap (for touchscreen devices) and a keyboard press.

For example, when you click an Add To Cart button or submit a form, how long does it take before your visitor sees a visual confirmation that the action has occurred?

Google does not count scrolling as an interaction unless it is triggered from the keyboard.

When measuring INP, good is less than 200 milliseconds; needs improvement is between 200 milliseconds and 500 milliseconds; and poor is anything above 500 milliseconds.

INP is difficult to measure using speed testing tools. Instead, look at Total Blocking Time, or TBT, which measures how long the webpage was blocked from receiving user input because it was busy loading other elements on the page. Speed testing tools can measure TBT.

Google gathers information on every interaction that happens on a page and then picks the slowest one, or close to it, as the score for your page. One slow item could make your INP score fall into the poor zone.

There are many developer-led fixes for INP issues, and most come down to ensuring that the code runs efficiently and responds quickly when a user interacts with it.

More about CLS

Cumulative Layout Shift (CLS) measures how much your page shifts around while it is loading. It happens when individual website elements such as images, text boxes, or ads push other items around once they’re loaded onto the page.

CLS differs from other Core Web Vitals metrics in that it doesn’t have a unit; it’s just a number. Good is less than 0.1; Needs improvement is between 0.1 and 0.25; and Poor is more than 0.25.

CLS fixes can be very simple. Layout shift is typically fixed by being specific with image, text, and ad box sizes.

Sites that serve ads may struggle with this. Ensure you’re reserving sufficient space in your design for ads so other elements don’t get pushed around when they load.
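The thresholds quoted above for LCP, INP and CLS can be collected into a small lookup so that numbers exported from a speed tool are labeled consistently. This is a minimal sketch. Treating a value that sits exactly on a boundary as the better rating is an assumption based on Google's published thresholds, and the function name is invented.

```python
# (good up to, poor above) for each Core Web Vital.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless score
}


def rate(metric: str, value: float) -> str:
    """Label a measurement as 'good', 'needs improvement' or 'poor'."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


for metric, value in [("LCP", 1.8), ("INP", 350), ("CLS", 0.3)]:
    print(metric, value, rate(metric, value))
```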

Best practices for website architecture

A well-planned website architecture is an important aspect of technical SEO as it makes it easy for your visitors to find what they need and for search engines to understand your site.

An ideal website is structured like a pyramid, with the homepage at the top and all subfolders and pages forming the base.

Signal the most important pages

Just because your homepage is at the top does not mean that it is the most important page. A well-organized and optimized website would result in all visitors starting on the page most relevant to their search terms. But you do need to link to your most important pages from your homepage.

Your menu typically forms the basis for understanding your site structure. You don’t need to put every page on your top navigation menu. Some items can be placed on a secondary menu or in the footer.

For example, careers pages are commonly put in the footer as search engines will know to return that page for careers-focused searches, with no need to spend navigation menu space on it.

Using crawlers for site architecture

Crawl your site and see how its structure looks in the crawler.

Doing a crawl and viewing your site in a tree map can immediately help you determine whether its structure follows a logical pattern or has issues.

In addition to ensuring that folders and pages are correctly organized, ensure that the names of your folders and pages make sense. Ideally, they should match closely with the types of keywords being searched for.

Role of headings

Finally, consider your heading tags part of good website architecture. Think of them as a subcomponent of your page, which is a subcomponent of your folder.

By extending your website architecture review down to the heading level, you are indicating relevance on a page-by-page basis.
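A heading review can be partly automated. The sketch below uses Python's built-in HTML parser to list a page's headings in order and flag places where the outline skips a level, such as an h2 followed directly by an h4. Treating skipped levels as a problem is a common convention assumed here, not a rule stated by search engines, and the sample HTML is invented.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for every h1-h6 element on a page."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None  # level of the heading currently being read
        self._text = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None


def skipped_levels(headings):
    """Return a message for each heading that jumps more than one level down."""
    problems = []
    previous = 0
    for level, text in headings:
        if previous and level > previous + 1:
            problems.append(f"h{previous} jumps to h{level} at '{text}'")
        previous = level
    return problems


SAMPLE_HTML = (
    "<h1>Heating and Cooling</h1><p>Intro</p>"
    "<h2>Furnace repair</h2><h4>Pricing</h4>"
)

outline = HeadingOutline()
outline.feed(SAMPLE_HTML)
print(outline.headings)
print(skipped_levels(outline.headings))
```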

Plan early

Make website architecture planning part of the website development process. It’s much easier to fix poor architecture before the site is launched.

If your site is already live, try crawling your website and looking for possible areas of improvement in its architecture.

Internal linking for technical SEO

Internal linking refers to links that point back to other pages on your own site instead of other websites.

Without internal linking, you’re hoping that your navigation and website architecture will do all the heavy lifting when it comes to emphasizing which pages are most important on your website.

The problem with relying on your website architecture is that every page can end up with the same number of links, since every page is linked from your navigation. This will negatively impact your website’s ability to rank well.

Getting started

When you’re starting to correct your internal linking, first, consider the site’s most important pages. To link to those pages, read the content and find phrases that naturally lend themselves to linking to the page.

Use your research to find the keywords you want your target page to rank for, and then try to use that text when you link back to that page. But don’t be spammy and use the same keyword 10 times in a row. Remember, you’re still writing for humans.

Ongoing internal linking

Of course, like all good technical SEO practices, internal linking should be incorporated into the content creation process instead of added after the fact.

You could include internal linking in your content checklist, making it a natural part of editing. Include a list of the most important pages so writers can reference them as they create their content.

Don’t go overboard with your internal linking. Pages with hundreds or thousands of links will probably get ignored unless they’re very important. Plus, your content will likely be challenging to read if every other word is linked.

Once you’ve crawled your site, check your internal links and work on increasing the number of internal (and external) links pointing to your most important pages.
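If your crawler can export each page together with the links found on it, counting inbound internal links takes only a few lines. The sketch below assumes a hypothetical export shaped as a dictionary of page URL to list of href values; the example.com URLs are invented. Pages that end up with a count of zero are worth a closer look.

```python
from collections import Counter
from urllib.parse import urldefrag, urljoin, urlparse


def inbound_link_counts(crawl, site_host):
    """Count how many distinct pages link to each internal URL.

    crawl maps a page URL to the raw href values found on that page
    (a hypothetical crawler export). External links, self-links and
    repeated links from the same page are ignored.
    """
    counts = Counter({page: 0 for page in crawl})
    for source, hrefs in crawl.items():
        targets = set()
        for href in hrefs:
            # Resolve relative links and drop any #fragment.
            target, _fragment = urldefrag(urljoin(source, href))
            if urlparse(target).netloc == site_host and target != source:
                targets.add(target)
        counts.update(targets)
    return counts


CRAWL = {
    "https://www.example.com/": ["/services/", "/blog/post-1", "https://twitter.com/example"],
    "https://www.example.com/services/": ["/", "/services/#pricing"],
    "https://www.example.com/blog/post-1": ["/services/", "/", "../services/"],
    "https://www.example.com/blog/post-2": ["/blog/post-1"],
}

for url, count in sorted(inbound_link_counts(CRAWL, "www.example.com").items()):
    print(count, url)
```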

When to use redirects

After reviewing your website’s architecture and existing content, you may realize that some pages will need to be changed or removed. This means you may need to redirect the old URLs to the new URLs.

You will need to choose between a 301 (permanent) redirect and a 302 (temporary) redirect. Most of the time, you will choose a 301 redirect because the URL has permanently changed.

302 redirects are used less often, for example when you are running a promotion, A/B testing a page or live testing a page.

301 redirects

301 redirects are mainly used when there is a change in the URL. Another reason is a more extensive content cleanup.

Let’s say you’re working on a site for a heating and cooling company and they were offering plumbing services, but they’ve decided to stop offering that. Of course, you’ll need to get rid of all the pages about plumbing, but should you redirect them?

In this case, you don’t want to redirect them because this isn’t something they offer any longer. You want to signal to Google that you no longer wish to have content on this topic and are removing it from your site.

Another scenario is you have multiple pages all covering the same topic, and none of them rank well. If you combine the content into one page, you’ll want to keep the URL from the most successful page and redirect the other pages to this winning page.

What to avoid

For redirects, avoid getting too far off-topic. If you have multiple posts on scrambled eggs, it makes sense to redirect them to one page about scrambled eggs.

However, redirecting multiple pages with recipes that include eggs to a page on scrambled eggs may not make much sense.

You also want to avoid creating chained redirects, where one redirect goes to another, and so on. This will annoy website visitors since the page will load more slowly. From a technical SEO perspective, search engine crawlers may not follow chained redirects to find the final destination.

Anytime you set up redirects, test them to ensure they reach the right destination page and avoid redirect chains.
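Chains are easy to spot if you keep your redirects in a simple old-URL to new-URL map. The sketch below follows each redirect to its final destination, reports any source that takes more than one hop, and shows how the map could be flattened so every old URL points straight at the final page. The paths are invented, and the map itself is a hypothetical stand-in for whatever your server or CMS stores.

```python
def redirect_path(redirects, url):
    """Follow a URL through the redirect map and return every stop on the way."""
    path = [url]
    while url in redirects:
        url = redirects[url]
        if url in path:
            raise ValueError("redirect loop: " + " -> ".join(path + [url]))
        path.append(url)
    return path


def find_chains(redirects):
    """Return the sources that need more than one hop to reach their destination."""
    chains = {}
    for source in redirects:
        path = redirect_path(redirects, source)
        if len(path) > 2:  # source, at least one intermediate stop, destination
            chains[source] = path
    return chains


def flatten(redirects):
    """Point every source directly at its final destination."""
    return {source: redirect_path(redirects, source)[-1] for source in redirects}


REDIRECTS = {
    "/scrambled-eggs-2019": "/eggs-scrambled",
    "/eggs-scrambled": "/scrambled-eggs",
    "/fluffy-eggs": "/scrambled-eggs",
}

print(find_chains(REDIRECTS))
print(flatten(REDIRECTS))
```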

Understanding schemas

Schema (or schema markup or structured data) is the language search engines use to understand website content.

In addition to helping search engines interpret and index your website content (and improve your site’s visibility in search results), schemas enable websites to generate rich snippets, enhancing search results.

These can include additional information such as ratings, reviews, prices, event dates, and more, making your listing stand out and increasing click-through rates.

Schema markup also provides context to a page’s content. For example, it can distinguish between a product review and a general article about the product or between a person’s name and a job title.

Implementing schemas

Schemas are typically implemented using a format called JSON-LD, and below is an example of a schema markup of a person:

<script type="application/ld+json">

{

"@context": "https://schema.org",

"@type": "Person",

"address": {

"@type": "PostalAddress",

"addressLocality": "Colorado Springs",

"addressRegion": "CO",

"postalCode": "80840",

"streetAddress": "100 Main Street"

},

"colleague": [

"http://www.example.com/JohnColleague.html",

"http://www.example.com/JameColleague.html"

],

"email": "info@example.com",

"image": "janedoe.jpg",

"jobTitle": "Research Assistant",

"name": "Jane Doe",

"alumniOf": "Dartmouth",

"birthPlace": "Philadelphia, PA",

"birthDate": "1979-10-12",

"height": "72 inches",

"gender": "female",

"memberOf": "Republican Party",

"nationality": "Albanian",

"telephone": "(123) 456-6789",

"url": "http://www.example.com",

"sameAs" : [ "https://www.facebook.com/",

"https://www.linkedin.com/",

"http://twitter.com/",

"http://instagram.com/",

"https://plus.google.com/"]

}

</script>

Experts say that if you are interested in schemas as part of your technical SEO, you should start by understanding what schemas your site could include. They also say to focus on the schemas that are particularly well supported by search engines, particularly Google.

Google has published a frequently updated list of the schemas it supports. Start there.

Once you have your list of content that qualifies for schema, check the required and recommended properties for each type.
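If your pages are generated from data, the JSON-LD block can be produced from the same data and checked for gaps before it goes live. This is a minimal sketch: the list of expected properties passed to the check is a placeholder you would replace with the required and recommended properties Google documents for your schema type, and the function names are invented.

```python
import json


def jsonld_script(data: dict) -> str:
    """Serialize structured data as a JSON-LD script element."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a value can never close the script element early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


def missing_properties(data: dict, expected: list) -> list:
    """Return the expected property names that are absent or empty."""
    return [name for name in expected if not data.get(name)]


person = {
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Jane Doe",
    "jobTitle": "Research Assistant",
}

# Placeholder list, not Google's actual requirements for any type.
print(missing_properties(person, ["name", "jobTitle", "url"]))
print(jsonld_script(person))
```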

To implement schemas, the Yoast SEO plug-in for WordPress has a built-in schema tool. In addition to Yoast, Schema & Structured Data for WP & AMP is another schema plug-in. Third-party services, like Schema App, can also be integrated with many different kinds of websites.

Finally, you can deploy Schema via Google Tag Manager if you can’t add any code or plugins to your website.

Once you implement schema

Before you deploy, make sure you test. Google has a Rich Results test tool that allows you to test your pages. You can also paste your schema code directly without publishing it, so you can check for any issues before your implementation goes live.

Once Google picks up your schema, you should see new options in Google Search Console, showing you the Schema types. You can view each of these to see if there are any errors.

You can also check in your search appearance section to see if any of your schema has actually made it to the search results pages, and if so, for what keywords. Not all Schema will result in different-looking search results, but it will help with your technical SEO.

Why canonicalization matters

Canonicalization is critically important to avoid duplicate content issues on your website.

A canonical page is the webpage that search engines consider as the authoritative or preferred version among multiple similar or duplicate pages on a website.

If you have similar pages on your website, indicating which one of those similar pages is canonical can help improve your website’s SEO and help search engine robots avoid wasting time on URLs that you don’t want to show up in search results anyway.

It’s also important in helping search engines understand websites translated into multiple languages.

Why canonicalization is important in technical SEO

When you specify a canonical page, it helps to consolidate link equity. So, rather than splitting link equity between all the different page versions, it directs it toward the original page, boosting it in the search engine results.

It also enhances the user experience by not confusing visitors with multiple pages or strange URLs in the search engine results.

Finally, it improves your site architecture by ensuring that all internal links across a site point to the canonical versions of pages.

Example of where to use canonicalization

Suppose you have an online store selling shoes. A single product can be accessed through multiple URLs due to different sorting options, filters, or parameters. Here are some example URLs that might exist for the same product:

https://www.shoestore.com/product/sneaker

https://www.shoestore.com/product/sneaker?color=red

https://www.shoestore.com/product/sneaker?size=10

https://www.shoestore.com/product/sneaker?ref=homepage

https://www.shoestore.com/category/sneakers/product/sneaker

These URLs display the same product with slight variations (color, size, or URL parameters), but search engines may view them as different pages with duplicate content. This can fragment the ranking signals (backlinks, user engagement) and cause issues with SEO.

To avoid these issues, you would implement a canonical tag in the <head> of the non-canonical versions of the product page to point to a single preferred URL, like this:

<link rel="canonical" href="https://www.shoestore.com/product/sneaker">
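On a templated site, the canonical tag is usually generated rather than typed by hand. The sketch below builds it by stripping the query string and fragment from the requested URL. That rule is an assumption that only holds when no parameter changes the page's main content, and it does not handle the category-path variant, which would need an explicit mapping to the preferred URL.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Drop the query string and fragment, and lowercase the host name."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, "", ""))


def canonical_tag(url: str) -> str:
    """Build the link element for the <head> of the page."""
    href = escape(canonical_url(url), quote=True)
    return f'<link rel="canonical" href="{href}">'


for variant in [
    "https://www.shoestore.com/product/sneaker?color=red",
    "https://www.shoestore.com/product/sneaker?size=10",
    "https://www.shoestore.com/product/sneaker?ref=homepage",
]:
    print(canonical_tag(variant))
```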

Another situation where canonicalization is helpful is when your PDF files are outranking the pages on your site that you actually want to rank. Because a PDF has no <head>, the canonical is sent as a rel="canonical" HTTP response header, which points search engines away from the PDF and toward the page you want to rank.

Other canonicalization rules

Every page should have a canonical tag. Most CMSs include this for you, but if you’re working with a custom CMS, it may not.

Don’t include more than one canonical per page; doing so will confuse search engine robots. Finally, include absolute URLs in your canonical tags.

You can determine if you have canonical issues by checking for duplicate content in the website crawl of your technical SEO audit. Most crawlers will include a function to check for this.

Hreflang for international websites

Presenting a website to visitors in multiple languages is more than just offering a Google Translate button on the page.

To help search engines understand your language offerings and the countries you’re targeting, you’ll need to implement hreflang tags.

For example, if you have different content for audiences in English-speaking Canada and the English-speaking United States, you would have hreflang tags for en-CA and en-US.

By indicating which pages should be shown to which people, you avoid situations where someone searches in Spanish and ends up on an English page or vice versa.

Hreflang to avoid duplicate content

Hreflang tags also help you deal with duplicate content.

If you have separate URLs intended for an American and a Canadian audience, hreflang helps the search engine robots understand that the content isn’t duplicate. You will also want to set the canonical tag.

Hreflang can be added in the page’s <head>, in HTTP headers or in the XML site map.

Implementing hreflang

There are many CMS plug-ins to help with hreflang. But here are some rules to consider:

1. Use correct language and region codes: The language code is based on the ISO 639-1 standard (two-letter language code). For example: en for English, fr for French and es for Spanish. The region code is optional and based on the ISO 3166-1 Alpha 2 standard (two-letter country code). For example: en-us for English in the United States, fr-ca for French in Canada, es-mx for Spanish in Mexico

2. Self-referencing hreflang tags: Each page that has “hreflang” tags must include a self-referencing tag. This helps search engines identify that this version is intended for a specific audience.

3. Cross-link all language/region versions: For every version of a page in different languages or regions, ensure that all pages link to each other using hreflang tags. This tells search engines how the different versions are related.

4. Use x-default for the fallback page: Use the x-default value to indicate the default page for users whose language/region is not explicitly targeted. This page acts as a fallback when no matching hreflang tag is available.

5. Use full URLs: The href attribute should always use absolute URLs (including the protocol like https://) rather than relative URLs. This helps search engines correctly identify and index the appropriate versions.

6. Ensure canonical tags align: Be cautious when using canonical tags with hreflang. Each page should have a self-referencing canonical tag corresponding to its URL, while the hreflang tags point to alternate language or region versions. Misalignment can confuse search engines and prevent the correct versions from being served.
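Several of these rules are easier to follow when the tags are generated from one list of page versions. The sketch below produces the same full set of tags for every version, which takes care of self-referencing and cross-linking, insists on absolute URLs, and adds an x-default entry. The URLs, language codes and function name are invented for the example.

```python
from html import escape


def hreflang_tags(versions: dict, default_code: str) -> list:
    """Build the hreflang link elements shared by every version of a page.

    versions maps a language or language-region code to an absolute URL.
    default_code names the version used as the x-default fallback.
    """
    if default_code not in versions:
        raise ValueError(f"no version for default code {default_code!r}")
    tags = []
    for code, url in sorted(versions.items()):
        if not url.startswith(("https://", "http://")):
            raise ValueError(f"hreflang URLs must be absolute: {url!r}")
        tags.append(
            f'<link rel="alternate" hreflang="{escape(code, quote=True)}" '
            f'href="{escape(url, quote=True)}">'
        )
    fallback = escape(versions[default_code], quote=True)
    tags.append(f'<link rel="alternate" hreflang="x-default" href="{fallback}">')
    return tags


VERSIONS = {
    "en-us": "https://www.example.com/us/",
    "en-ca": "https://www.example.com/ca/",
}

# The same block goes into the <head> of both the US and the Canadian page.
print("\n".join(hreflang_tags(VERSIONS, default_code="en-us")))
```

Each page still carries its own self-referencing canonical tag, which is generated separately.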

Implementing hreflang takes a bit of planning, so rely on your technical SEO team to guide you.

Technical SEO for images

Image search is becoming an important part of SEO as the web becomes more visual. Optimizing images for SEO isn’t necessarily highlighted in a crawl, and it can get lost amongst all the other technical SEO issues.

Here are the basics for optimizing your images for SEO:

- Ensure the file name says what’s in the image.

- Use alt text to describe the contents of the image.

- Ensure images are uploaded at the size they will be shown at. If you’re using responsive images, these resize automatically based on the screen size. The srcset attribute can be used to give the browser different image options to choose from, depending upon the visitor’s screen size.

- Compress images to save precious site speed time.

- Consider having an image sitemap if the images often turn up in search results for some of your target keywords. Google has an excellent reference on how to build an image sitemap, and some SEO plugins will be able to generate an image sitemap for you.

- Periodically review and optimize images as new pieces of content are added or older pieces are updated, as website editors often forget to optimize their images properly.
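To make the srcset point concrete, here is a small sketch that builds an image tag offering the browser several widths of the same picture, along with descriptive alt text. The file naming pattern (name-width.extension), the widths and the default sizes value are all assumptions for illustration; your CMS or image service may use different conventions.

```python
from html import escape


def responsive_img(base_path, extension, widths, alt, sizes="100vw"):
    """Build an <img> tag with a srcset entry for each available width.

    Assumes resized files are named like /images/red-sneaker-400.jpg
    (a hypothetical convention). The largest file is used as the fallback src.
    """
    ordered = sorted(widths)
    srcset = ", ".join(f"{base_path}-{w}.{extension} {w}w" for w in ordered)
    fallback = f"{base_path}-{ordered[-1]}.{extension}"
    return (
        f'<img src="{escape(fallback, quote=True)}" '
        f'srcset="{escape(srcset, quote=True)}" '
        f'sizes="{escape(sizes, quote=True)}" '
        f'alt="{escape(alt, quote=True)}">'
    )


print(responsive_img("/images/red-sneaker", "jpg", [800, 400], "Red canvas sneaker, side view"))
```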

Technical SEO for on-site videos

Search engines have made great strides in understanding video. Based on the video and audio, they can now determine some limited details about the video and associate the content on the page with the video itself.

Despite these enhancements, videos are some of the least accessible content elements to a search engine robot.

On your website, you may want to choose a non-YouTube hosting service so you have more control over how your video is presented.

Transcripts

Next, you’ll need to get video transcripts. Without them, search engines can only understand a limited portion of the video’s content.

If you’re worried that the transcripts will make your page too long or distract from the other information, you can put the transcript in a collapsible element so it’s only shown when visitors request it.

As long as the transcript text is on the page when it is loaded, search engine robots will still consider it when reviewing the page.

Captions

In addition to transcripts, captions are important for those who watch videos on phones with the sound turned off. They also improve accessibility for viewers who are hard of hearing or deaf.

Schema and site maps

Like images, you’ll want to give the search engines lots of information about your video to help them include it in search results.

Each video should have schema markup and follow Google’s implementation guidelines. You should also have a video site map to give extra context to your videos and where they live.

Some plugins can help you build a video site map, or your video provider may have a site map option built into their product.

Site speed

You also want to ensure you’re adding your videos to pages in a way that doesn’t reduce site speed. Typically when a video is embedded, the actual video isn’t downloaded when the page loads, which means the video only starts to stream when someone clicks play.

But if you have an auto-playing video, you might be destroying your site speed as a result. Make sure to run site speed tests on your pages with video embeds to be sure they aren’t negatively impacting your speed.

Make the most of SEO!

As this article shows, the technical aspects of SEO can be complex, so it’s worth investing in an internal resource or finding a digital marketing agency.

If you are looking for someone to help you with basic SEO and writing for search engines, send us a note!